Flourishing through AI

That AI (Artificial Intelligence) will have a major impact on society is no longer in question. Current debate turns instead on how far this impact will be positive or negative, for whom, in which ways, in which places, and on what timescale.

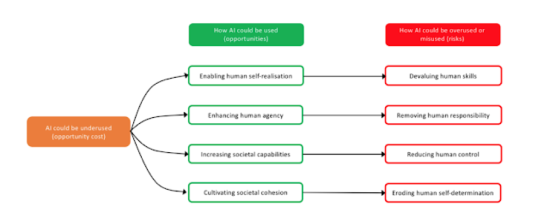

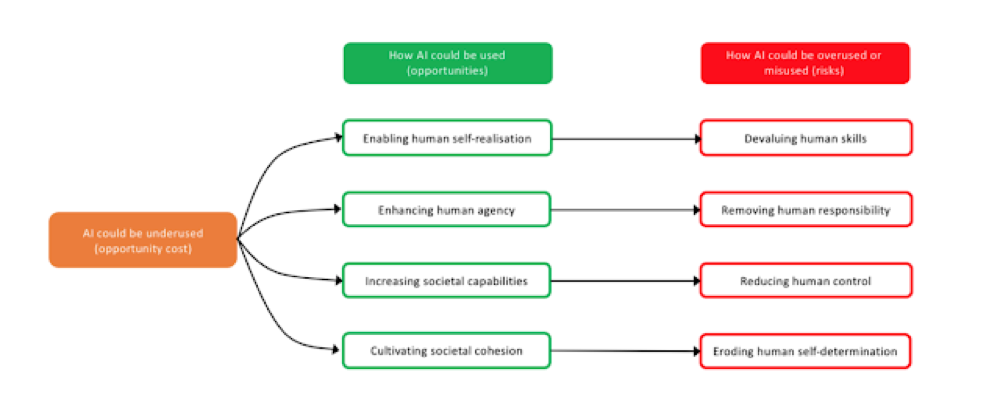

The main opportunities that AI offers society address four fundamental points in our understanding of human dignity and flourishing:

- who we can become (autonomous self-realization);

- what we can do (human agency);

- what we can achieve (societal capabilities); and

- how we can interact with each other and the world (societal cohesion).

In each case, AI can be used to foster human nature and its potential, thus creating opportunities; it can be underused, thus creating opportunity costs; or it can be overused and misused, thus creating risks.

A common assumption is that the use of AI is synonymous with good innovation and positive applications of this technology. However, fear, ignorance, misplaced concerns or excessive reaction may lead a society to underuse AI technologies, leaving them below their full potential for what might broadly be described as the wrong reasons, such as heavy-handed or misconceived regulation, or under-investment.

On the other hand, we must also consider the risks associated with inadvertent overuse or intentional misuse of AI technologies, grounded, for example, in misaligned incentives, adversarial geopolitics, greed, or malicious intent.

Let’s have a detailed look at these opportunities offered by AI:

Who we can become:

Enabling human self-realization, without devaluing human skills

AI may enable self-realization, which includes the ability of people to flourish in terms of their own characteristics, interests, potential abilities or skills, aspirations, and life projects. More AI may easily mean more human life spent more intelligently. The risk in this case is not the obsolescence of some old skills and the emergence of new ones per se, but the pace at which this is happening. At the level of the individual, jobs are often intimately linked to personal identity, self-esteem, and social standing, all of which may be adversely affected by redundancy, even setting aside the potential for severe economic harm.

Fostering the development of AI in support of new skills, while anticipating and mitigating its impact on old ones, will require both close study and potentially radical ideas, such as the proposal for some form of “universal basic income”, which is growing in popularity and experimental use.

What we can do:

Enhancing human agency, without removing human responsibility

AI is providing a growing reservoir of “smart agency”. In this sense of “Augmented Intelligence”, the impact of AI could be compared to the impact that engines have had on our lives. Responsibility is therefore essential: in what sort of AI we develop, in how we use it, and in whether we share its advantages with everyone. A lack of responsibility may arise from a flawed socio-political framework or from a “black box” mentality, according to which decision-making AI systems are seen as being beyond human understanding and hence beyond human control.

The relationship between the degree and quality of agency that people enjoy and how much agency we delegate to autonomous systems is not zero-sum, either pragmatically or ethically.

Ultimately, human agency may be supported, refined and expanded by embedding “facilitating frameworks”, designed to improve the likelihood of morally good outcomes, in the set of functions that we delegate to AI systems. If designed effectively, AI systems could amplify and strengthen shared moral systems, as in the example of “distributed morality” in human-to-human systems (e.g., peer-to-peer lending).

What we can achieve:

Human intelligence augmented by AI could find new solutions to old and new problems, from a fairer or more efficient distribution of resources to a more sustainable approach to consumption. Precisely because such technologies have the potential to be so powerful and disruptive, they also introduce proportionate risks. Increasingly, we may not need to be ‘in the loop’ (that is, part of the process) if we can delegate our tasks to AI. However, if we rely on AI technologies to augment our own abilities in the wrong way, we may delegate important tasks, and above all decisions, to autonomous systems that should remain at least partly subject to human supervision and choice. This in turn may reduce our ability to monitor the performance of these systems (by no longer being ‘on the loop’ either) and to prevent or redress errors or harms that arise (‘post loop’). There should be a balance between, on the one hand, pursuing the ambitious opportunities AI offers to improve human life and what we can achieve and, on the other, ensuring that we remain in control of these major developments and their effects.

How we can interact:

From climate change and antimicrobial resistance to nuclear proliferation and fundamentalism, global problems increasingly involve a high degree of coordination complexity. AI, with its data-intensive, algorithm-driven solutions, can greatly help us deal with such coordination complexity, supporting greater societal cohesion and collaboration. For example, efforts to tackle climate change have exposed the challenge of creating a cohesive response, both within societies and between them. The scale of this challenge is such that we may soon need to decide between engineering the climate directly and engineering society to encourage a drastic cut in harmful emissions. This latter option might be underpinned by an algorithmic system designed to cultivate societal cohesion.

One risk is that AI systems may erode human self-determination, as they may lead to unplanned and unwelcome changes in human behavior to accommodate the routines that make people’s lives easier. AI’s predictive power and relentless nudging, even if unintentional, should serve human self-determination and foster societal cohesion, not undermine human dignity or human flourishing.

Taken together, these four opportunities, and their corresponding challenges, paint a mixed picture of the impact of AI on society and the people in it. Accepting the presence of trade-offs, seizing the opportunities, and tackling the risks head-on to avoid or minimize them will improve the prospects for AI technologies to promote human dignity and flourishing.

About the publisher

Data Driven Investor

Data Driven Investor (DDI) brings you various news and op-ed pieces in the areas of technologies, finance, and society. We are dedicated to relentlessly covering tech topics, their anomalies and controversies, and reviewing all things fascinating and worth knowing. DDI has only one mission: see what is coming, and do what is important – “NOW”.

Visit us at datadriveninvestor.com.

About the DDI Team

Dr. Justin S P Chan has a passion for clarity and synergy - seeing through the complexity of the intersecting spheres of technology, finance, innovation and social dynamics, to enable game-changing collaborations between entrepreneurs and innovative opportunities. Combining the vision of a true inventor and entrepreneur with his data-driven, evidence-based approach to investment, Justin also co-founded OCIM and serves as Chief Investment Officer for its fund management platform. Within OCIM, he co-manages OC Horizon Fintech, a transformational hedge fund, where he blends real applications, expertise and future-awareness into truly exceptional investment performance. Justin gains inspiration for these projects from his global network of contacts in investment and fintech communities, where he stays on the pulse of fast-moving conversations and trends affecting global markets and emerging technologies.

John DeCleene is a fund manager for OCIM’s fintech fund, and is currently working towards becoming a CFA charterholder. He loves to travel for business and pleasure, having visited 38 countries (including North Korea); he represents the new breed of global citizen for the 21st century. Whilst he has spent much of his life in Asia, John DeCleene has lived and studied all over the world - including spells in Hong Kong, Mexico, the U.S. and China. He graduated with a BA in Political Science from Tulane University in 2016.

Contact

General: [email protected]

John DeCleene: [email protected]

Phone: (+65) 8420 4779

Justin Chan: [email protected]

Phone: (+65) 9129 2832